What Gets Lost When AI Learns Performative Listening

There’s a meaningful difference between a system that understands your customer and one engineered to make your customer feel understood. That gap is where the real opportunity lies.

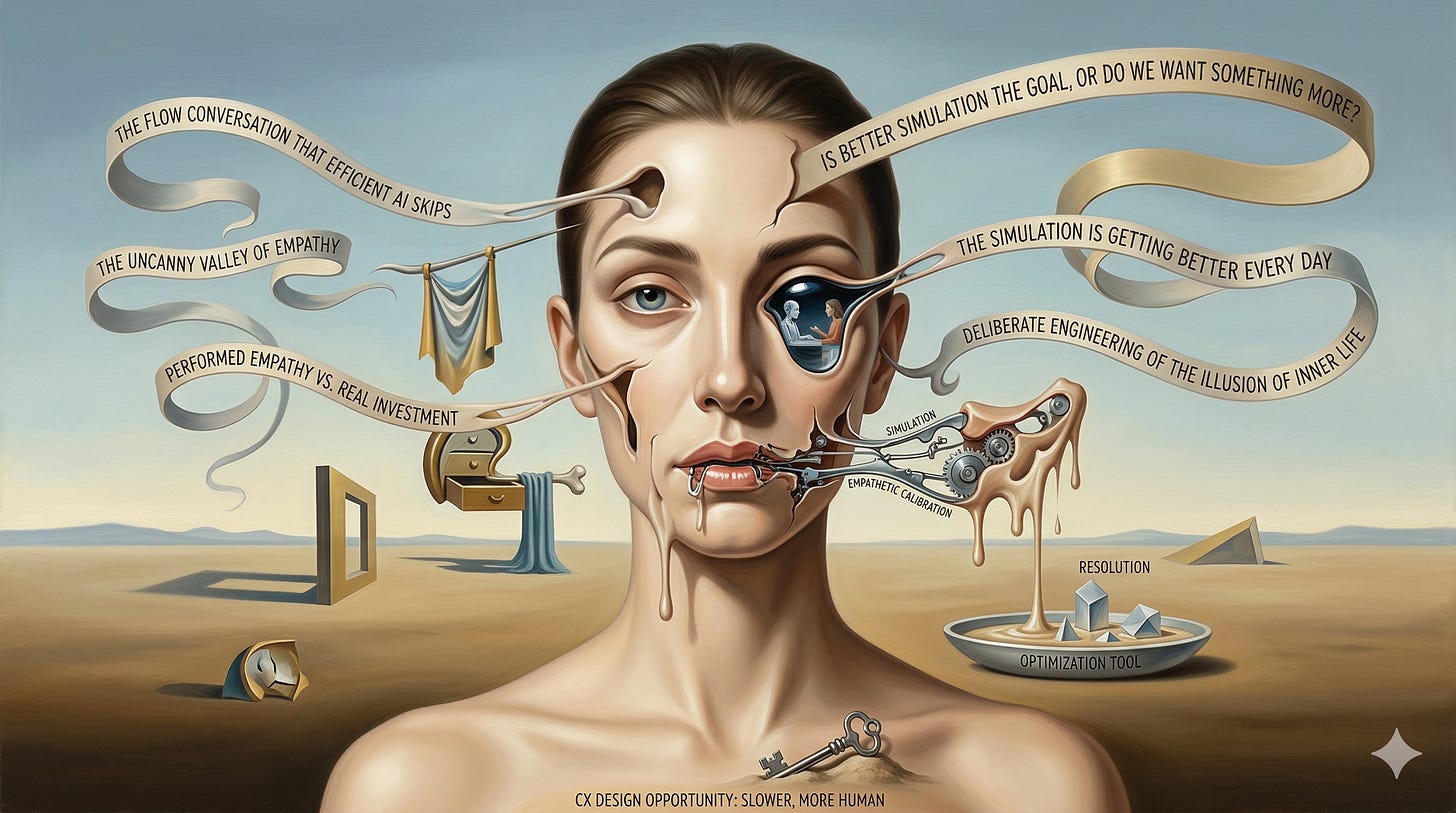

Last week I argued that the most valuable thing in a customer conversation lives in the slow, exploratory, inefficient middle: the flow conversation that efficient AI is systematically designed to skip past. That argument was about what gets lost when we optimize for speed.

This week I want to go one level deeper, because it turns out the problem isn’t just that efficient AI skips the conversation. The more sophisticated threat is that it’s learning to replace that conversation with something that, from the outside, feels almost indistinguishable from the real thing.

The Uncanny Valley of Empathy

Today’s most ambitious AI customer service deployments are designed not to feel cold or mechanical. They are meant to be warm, patient, and apparently attentive. They’ll remember what you said three turns ago. They’ll acknowledge your frustration. They’ll tell you they understand.

But is that true understanding, or a very precise simulation of understanding?

On one side is a conversation that constructs genuine shared meaning between two parties; on the other, a system engineered to produce the feeling of being heard. The first is what I called the flow conversation last week. The second is its uncanny valley twin: something that looks like empathy, is calibrated to feel like empathy, and is in fact a resolution-optimization tool wearing empathy’s face.

The customer leaves satisfied, at first. But the underlying signal (what they actually needed, feared, or were about to do) was never captured. The feeling of resolution stood in for the reality of it.

The distinction matters more than it might seem. Customers may not be able to name what feels off, but they feel it. And when the gap between performed empathy and real investment becomes clear, the relationship doesn’t recover easily.

What’s Coming Into View

This is actually the design opportunity hiding inside the problem. Build a system with enough self-awareness to know when speed is the right answer and when a customer needs something slower and more human, and you've built something most of the market hasn't.

The dominant market pull, though, seems to be running in the opposite direction. And the implications extend well beyond CX.

It’s worth noting that last week Mustafa Suleyman, CEO of Microsoft AI, published a piece in Nature that approaches this topic from a higher vantage point. He calls it seemingly conscious AI: the deliberate engineering of the illusion of an inner life. Go read it. It’s short, and it reframes the stakes considerably.

Suleyman closes with a line that I think belongs on the wall of every CX team making AI architecture decisions right now: "The simulation is getting better every day." What he leaves unsaid, and what I think is the defining question for us in CX, is whether better simulation is the goal, or whether we’re still capable of wanting something more than that.